Platform

Optimized for macOS 12 and above.

Local AI, Engineered for Inference

A complete local AI inference stack.

Modular, offline-first, and built for developers who need full control.

Run AI where your data lives.

Reason locally. Act intelligently.

A high-performance local LLM inference engine for reasoning, agents, and tool-calling workflows.

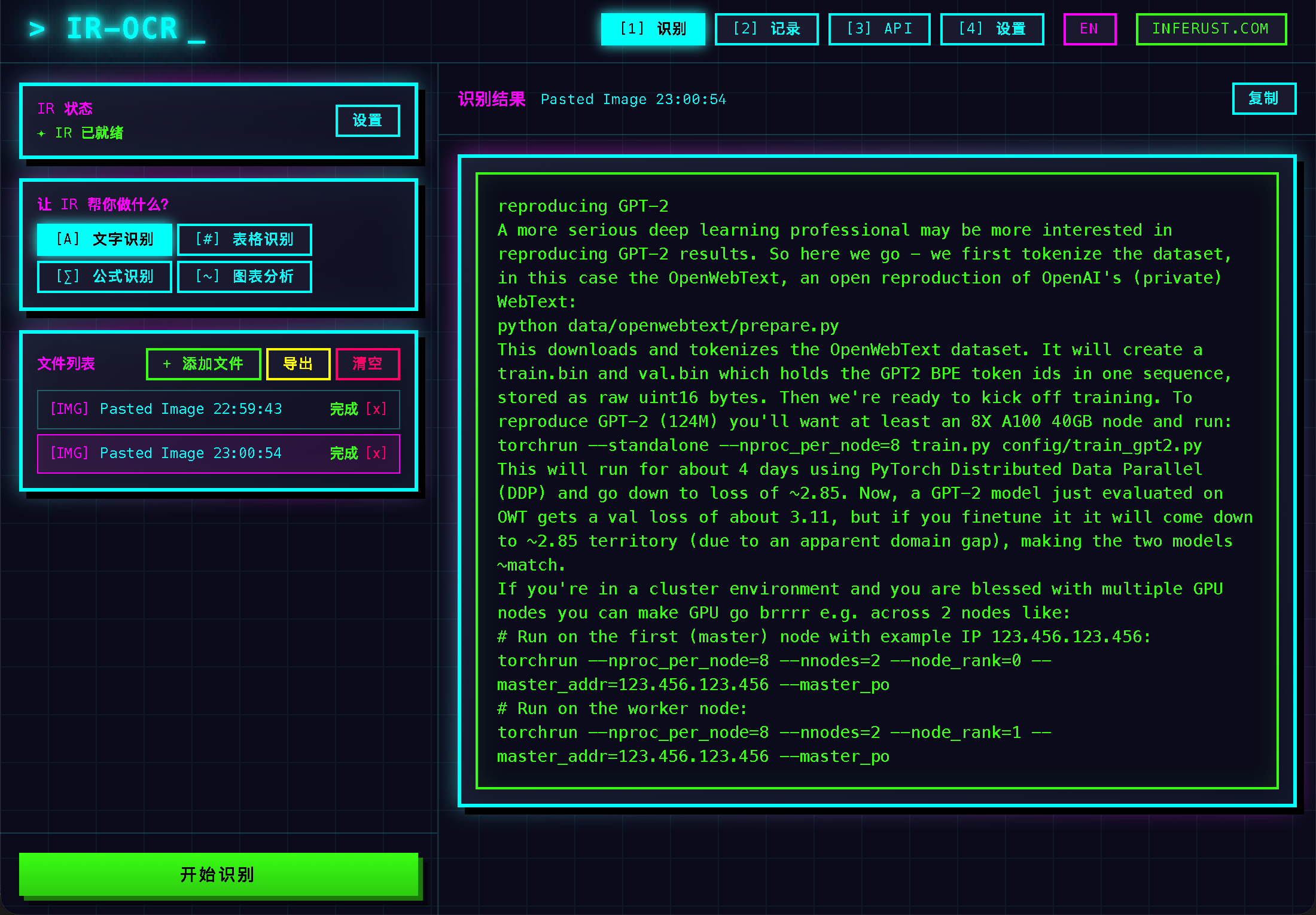

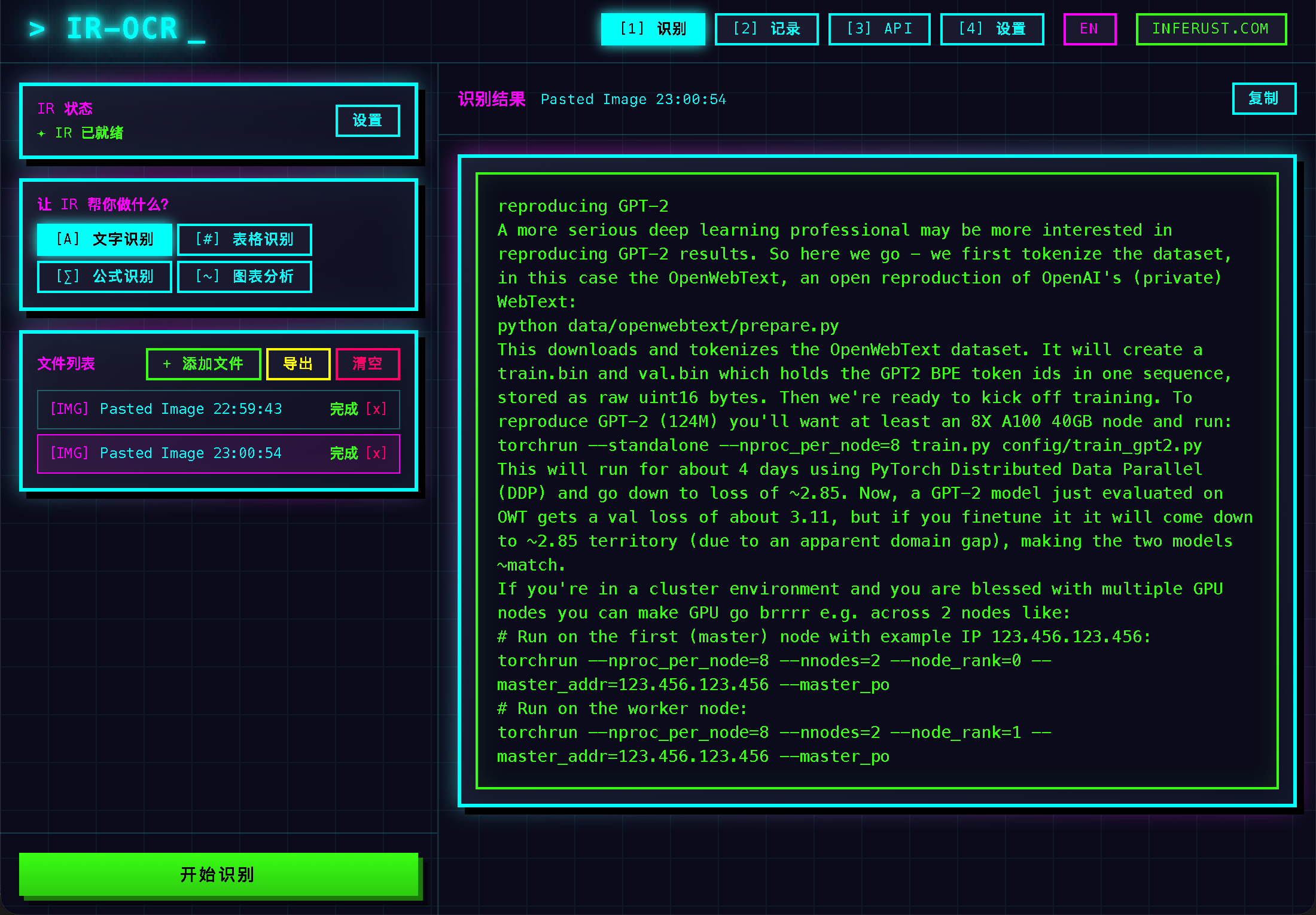

Turn pixels into structured text.

A robust OCR engine for documents, PDFs, invoices, and multi-language text - optimized for local execution.

DOWNLOAD v0.1.0

Listen once. Understand instantly.

Fast and accurate speech-to-text for real-time and batch audio, fully local and privacy-preserving.

Give your AI a natural voice.

Neural text-to-speech with expressive, controllable voices - no cloud required.

Create visuals from pure intent.

A local image generation engine for creative workflows, design iteration, and AI-assisted visuals.

Quickly verify whether your current device matches the best local runtime profile.

Optimized for macOS 12 and above.

Apple Silicon (M1-M4) gives the best acceleration.

8GB+ unified memory is recommended.
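The three recommendations above can be checked with a few lines of Python. This is a minimal sketch, not the check the site itself runs: the function names `check_profile` and `detect_memory_gb` are hypothetical, and reading total memory through `os.sysconf` is an assumption that holds on most Unix-like systems but not everywhere.

```python
import os
import platform

MIN_MACOS_MAJOR = 12   # "macOS 12 and above"
MIN_MEMORY_GB = 8      # "8GB+ unified memory is recommended"


def check_profile(macos_version: str, machine: str, memory_gb: float) -> dict[str, bool]:
    """Compare device facts against the three recommendations listed above."""
    try:
        major = int(macos_version.split(".")[0])
    except ValueError:
        major = 0  # empty or unparsable version string: treat as not macOS
    return {
        "macos_12_or_newer": major >= MIN_MACOS_MAJOR,
        # Native Python on Apple Silicon reports "arm64"; under Rosetta it reports "x86_64".
        "apple_silicon": machine == "arm64",
        "memory_8gb_or_more": memory_gb >= MIN_MEMORY_GB,
    }


def detect_memory_gb() -> float:
    """Best-effort total physical memory in GiB; returns 0.0 if it cannot be read."""
    try:
        return os.sysconf("SC_PAGE_SIZE") * os.sysconf("SC_PHYS_PAGES") / 1024**3
    except (ValueError, OSError, AttributeError):
        return 0.0


if __name__ == "__main__":
    # platform.mac_ver()[0] is an empty string on non-macOS systems.
    result = check_profile(platform.mac_ver()[0], platform.machine(), detect_memory_gb())
    for name, ok in result.items():
        print(f"{name}: {'ok' if ok else 'not met'}")
```

Keeping `check_profile` free of system calls makes it easy to test with made-up values, while the detection helpers stay separate and best-effort.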

Try a browser-based OCR preview. Upload an image or switch sample scenes to see extracted text.

Choose a sample or upload your own image to test OCR extraction behavior.

This browser demo uses a lightweight simulation. The Inferust Optix desktop app provides full OCR accuracy and layout parsing.

Tip: filenames containing "invoice", "receipt", or "id" will auto-match sample templates.
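The filename matching described in the tip could work roughly as follows. This is an illustrative sketch only: the function name `match_template`, the case-insensitive comparison, and the keyword priority order are assumptions, not the demo's actual logic.

```python
from typing import Optional

# Keywords taken from the tip above; checked in this order (order is an assumption).
TEMPLATE_KEYWORDS = ("invoice", "receipt", "id")


def match_template(filename: str) -> Optional[str]:
    """Return the first keyword contained in the filename, or None if none match."""
    name = filename.lower()  # case-insensitive matching is an assumption
    for keyword in TEMPLATE_KEYWORDS:
        if keyword in name:
            return keyword
    return None


print(match_template("Invoice_0042.png"))
print(match_template("landscape.png"))
```

Plain substring matching is simple, but a short keyword such as "id" also matches unrelated names like `video.png`, so a stricter implementation might match whole tokens instead.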

Validated local AI workflows for production teams.

DOCUMENT AUTOMATION

Extract invoices, contracts, and forms into validated records without sending data to external APIs.

SUPPORT AI

Use local RAG and ASR to accelerate internal support while keeping logs and transcripts on-device.

CREATIVE STUDIO

Generate scripts, voiceovers, and key visuals locally for faster campaign experimentation.

A transparent release track for the full local AI stack.

Delivered desktop OCR package and launch-site demo with bilingual onboarding and analytics baseline.

Focus on local tool-calling, quantized model packs, and deployment profiles for Apple Silicon teams.

Ship speech, voice, and image components with unified local orchestration and enterprise policy controls.

Recent release notes, engineering writeups, and launch highlights.

A practical blueprint for selecting models, sizing hardware, and shipping secure local inference workflows.

Read article

Initial public build for Apple Silicon includes invoice OCR, layout parsing, and local runtime optimization.

Download package

Homepage now includes solution templates, roadmap milestones, and a dedicated blog index for ongoing updates.

Open blog index

Everything needed to evaluate, launch, and scale local AI products.

Follow release decisions, architecture deep dives, and implementation walkthroughs.

Explore blog

Playbook

See recommended rollout stages for pilot teams, security review, and production hardening.

Read playbook

Changelog

Watch product status changes across Optix, Cortex, Audix, Voxa, and Imagix.

View updates

Contact

Share your stack and target scenarios to get tailored rollout recommendations.

Contact Inferust

Answers to common questions about local deployment and product roadmap.

Yes. Inference runs locally so your data does not need to leave your device.

Planned products are designed around open formats such as GGUF and Safetensors for flexible local workflows.

Inferust Optix is available now, while Cortex, Audix, Voxa, and Imagix are in active development.

You can contact the team via email and share your use case to get roadmap updates and early access opportunities.